Without autoscaling, you’d choose between two bad options: pay for enough GPUs to handle your peak traffic 24/7, or accept that requests fail when load exceeds your fixed capacity. Autoscaling eliminates this tradeoff by adjusting the number of replicas backing a deployment based on demand. When traffic rises, the autoscaler adds replicas. When it falls, it removes them. The goal is to match capacity to load so you pay for what you use without sacrificing latency. Baseten bills per minute while a replica is deploying, scaling, or serving requests. A deployment scaled to zero replicas incurs no charges, but the wake-up period when a new request arrives is billable. For details on minimizing that startup cost, see Cold starts.Documentation Index

Fetch the complete documentation index at: https://docs.baseten.co/llms.txt

Use this file to discover all available pages before exploring further.

Reference

Reference

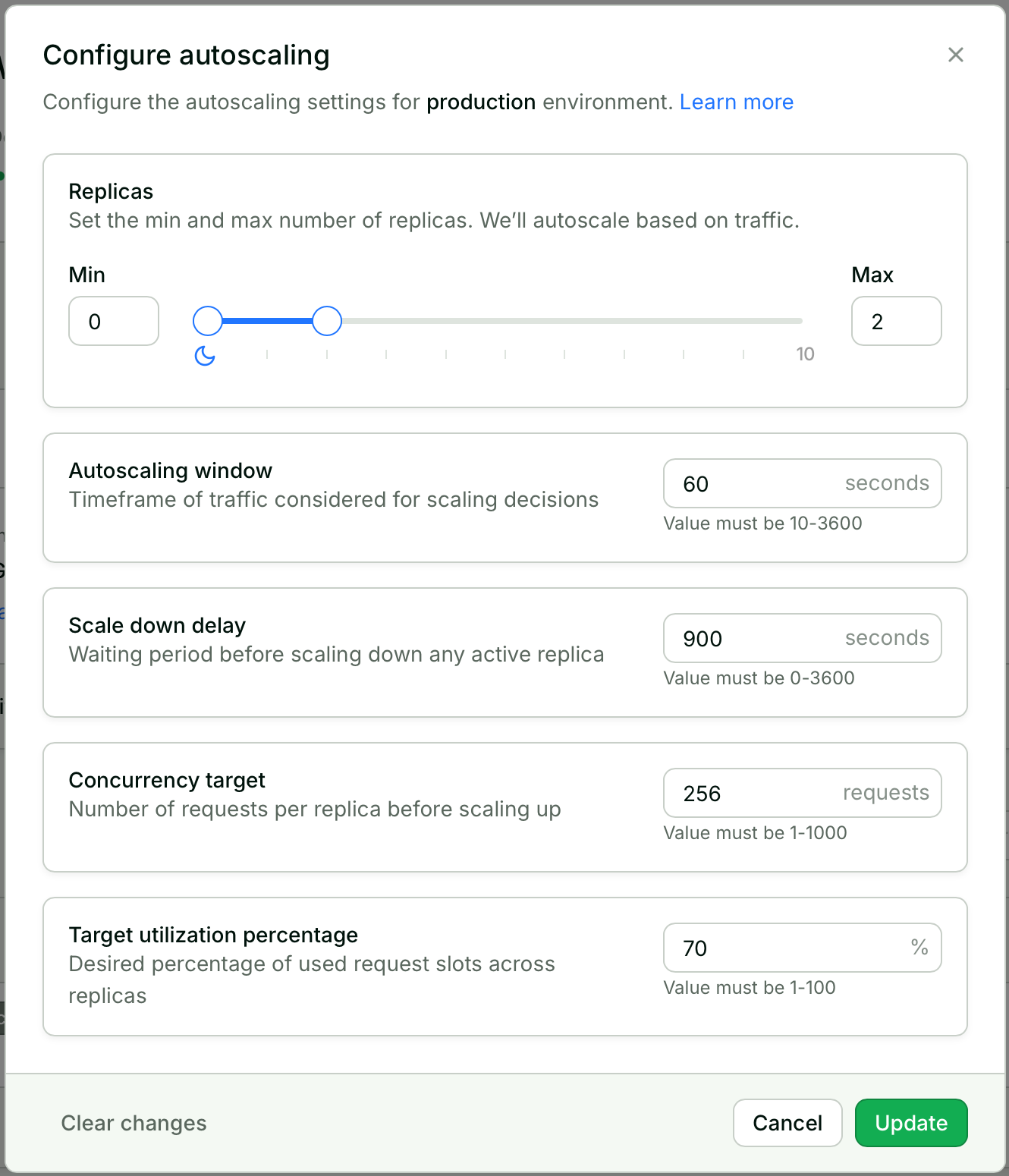

Baseten provides default settings that work for most workloads.

Tune your autoscaling settings based on your model and traffic.

| Parameter | Default | Range | What it controls |

|---|---|---|---|

| Min replicas | 0 | ≥ 0 | Baseline capacity (0 = scale to zero). |

| Max replicas | 1 | ≥ 1 | Cost/capacity ceiling. |

| Autoscaling window | 60s | 10–3600s | Time window for traffic analysis. |

| Scale-down delay | 900s | 0–3600s | Wait time before removing idle replicas. |

| Concurrency target | 1 | ≥ 1 | Requests per replica before scaling. |

| Target utilization | 70% | 1–100% | Headroom before scaling triggers. |

- UI

- cURL

- Python

- Select your deployment.

- Under Replicas for your production environment, choose Configure.

- Configure the autoscaling settings and choose Update.

Show the configure-autoscaling panel

Show the configure-autoscaling panel

How autoscaling works

The autoscaler matches replica count to demand by sampling in-flight requests once everyautoscaling_window (60 seconds by default). It averages the load over that window, divides by each replica’s effective capacity (concurrency_target × target_utilization_percentage), and rounds up to set the desired replica count. Scaling up happens at the next decision, but scaling down is deliberately patient: load has to stay below the threshold for an entire scale_down_delay before the autoscaler halves the excess, and it keeps halving on each subsequent delay rather than dropping replicas all at once. That asymmetry is what keeps the deployment from oscillating when traffic dips and recovers. Shape a traffic pattern in the simulator below to watch each of those decisions land on the chart.

To put numbers on it, consider a deployment with concurrency_target set to 10 and target_utilization_percentage at 70%. Each replica’s effective capacity is 7 concurrent requests (10 × 0.70), which is the threshold the autoscaler compares the windowed average against. If traffic jumps from 5 to 25 in-flight requests over an autoscaling_window, the autoscaler computes ⌈25 / 7⌉ = 4 replicas at the next decision boundary and begins provisioning them; on the chart above, each scale-up shows up as a triangle on the time axis and a step in the green replica line. Replicas keep coming online until the deployment hits max_replica, after which any additional load queues until capacity frees up.

Scaling down works in the opposite direction at a deliberately slower pace. When the windowed load drops below the threshold, the autoscaler waits an entire scale_down_delay (900 seconds by default in production) before acting, and then removes only ⌈excess / 2⌉ replicas rather than the whole surplus. The timer resets after that halving, so a deployment with eight excess replicas drains to four, then two, then one, with a full delay between every step. That exponential back-off is what prevents oscillation: if traffic briefly dips and recovers within the delay, the replicas stay warm and no scale event fires. Scale-down also clamps at min_replica, which most production deployments hold at two or more so a healthy replica is always available.

Replicas

Each replica is an independent instance of your model, running on its own hardware and capable of serving requests in parallel with other replicas. The autoscaler controls how many replicas are active at any given time, but you set the boundaries.The floor for your deployment’s capacity. The autoscaler won’t scale below this number.Range: ≥ 0The default of 0 enables scale-to-zero: when no requests arrive for long enough, all replicas shut down and your deployment incurs no charges. The tradeoff is that the next request triggers a cold start, which can take minutes for large models. During that wake-up period, billing is per minute even though the replica isn’t yet serving responses.

For production deployments, set

min_replica to at least 2. This eliminates cold starts and provides redundancy if one replica fails.The ceiling for your deployment’s capacity. The autoscaler won’t scale above this number.Range: ≥ 1This setting protects against runaway scaling and unexpected costs. If traffic exceeds what your maximum replicas can handle, requests queue rather than triggering new replicas. See Request lifecycle for details on queuing and load shedding behavior. The default of 1 effectively disables autoscaling: you get exactly one replica regardless of load.Estimate max replicas:

Scaling triggers

The autoscaler decides when a replica is “full” by comparing in-flight requests against a per-replica threshold.concurrency_target caps how many simultaneous requests each replica accepts, and target_utilization_percentage cuts the threshold lower so the autoscaler can trigger a scale-up before any replica is completely saturated, leaving room for new replicas to come online without queueing requests in the meantime. Scale-up fires when:

The diagram below shows a replica with concurrency_target of 8 and target_utilization of 50%, so the per-replica threshold sits at 4. The first four requests fill capacity within headroom; the fifth crosses the threshold, and the autoscaler provisions a second replica to absorb the overflow before the remaining slots saturate.

How many requests each replica can handle simultaneously. This directly determines replica count for a given load.Range: ≥ 1Given the current load, the autoscaler calculates desired replicas:In-flight requests are requests sent to your model that haven’t returned a response (for streaming, until the stream completes). This count is exposed as

baseten_concurrent_requests in the metrics dashboard and metrics export.The right value depends on how your model uses hardware. Image generation models that consume all GPU memory per request can only process one at a time, so a concurrency target of 1 is correct. LLMs and embedding models batch requests internally and can handle dozens simultaneously, so higher targets (32 or more) reduce cost by packing more work onto each replica.Tradeoff: Higher concurrency = fewer replicas (lower cost) but more per-replica queueing (higher latency). Lower concurrency = more replicas (higher cost) but less queueing (lower latency).| Model type | Starting concurrency |

|---|---|

| Standard Truss model | 1 |

| vLLM / LLM inference | 32–128 |

| SGLang | 32 |

| Text embeddings (TEI) | 32 |

| BEI embeddings | 96+ (min ≥ 8) |

| Whisper (async batch) | 256 |

| Image generation (SDXL) | 1 |

Concurrency target controls requests sent to a replica and triggers autoscaling.

predict_concurrency (Truss config.yaml) controls requests processed inside the container.

Concurrency target should be less than or equal to predict_concurrency.

See the

predict_concurrency field in the Truss configuration reference for details.Headroom before scaling triggers. The autoscaler scales when utilization reaches this percentage of the concurrency target, not when replicas are fully loaded.Range: 1–100%The effective threshold is:With a concurrency target of 10 and utilization of 70%, scaling triggers at 7 concurrent requests (10 x 0.70), leaving 30% headroom for absorbing spikes while new replicas start.Lower values (50-60%) provide more headroom for spikes but cost more. Higher values (80%+) are cost-efficient for steady traffic but absorb spikes less effectively.

Scaling dynamics

Once the autoscaler decides to scale, two settings control the pace.autoscaling_window determines how much history feeds into each decision, so a longer window averages out short spikes while a shorter one reacts to traffic changes faster. scale_down_delay gates removal in the other direction by holding replicas warm even after load drops, so a brief dip does not trigger a teardown that the next request would have to wait through. Together, the two settings tune the tradeoff between responsiveness and stability. The diagram below shows traffic falling to zero, the idle timer filling up, and the replica being reclaimed only once the timer crosses the scale_down_delay threshold.

How far back (in seconds) the autoscaler looks when measuring traffic. Traffic is averaged over this window to make scaling decisions.Range: 10–3600 secondsA 60-second window smooths out momentary spikes by averaging load over the past minute. Shorter windows (30-60s) react quickly to traffic changes, which suits bursty workloads. Longer windows (2-5 min) ignore short-lived fluctuations and prevent the autoscaler from chasing noise.

How long (in seconds) the autoscaler waits after load drops before removing replicas.Range: 0–3600 secondsWhen load drops, the autoscaler starts a countdown. If load stays low for the full delay, it removes replicas using exponential back-off (half the excess, wait, half again). If traffic returns before the countdown finishes, the replicas stay active and the countdown resets.This is your primary lever for preventing oscillation. If replicas repeatedly scale up and down, increase this value first.

Development deployments

Development deployments are designed for iteration, not production traffic. Replicas are fixed at 0-1 to match thetruss watch workflow, where you’re testing changes on a single instance rather than handling concurrent users. You can still adjust timing and concurrency settings.

| Setting | Value | Modifiable |

|---|---|---|

| Min replicas | 0 | No |

| Max replicas | 1 | No |

| Autoscaling window | 60 seconds | Yes |

| Scale-down delay | 900 seconds | Yes |

| Concurrency target | 1 | Yes |

| Target utilization | 70% | Yes |

Next steps

Traffic patterns

Identify your traffic pattern and get recommended starting settings.

Cold starts

Understand cold starts and how to minimize their impact.

API reference

Complete autoscaling API documentation.

Engine-specific autoscaling

Recommended settings for BEI and Engine-Builder-LLM with dynamic batching.